Introduction

Selecting the best GPU is a vital resolution when operating machine studying and LLM workloads. You want sufficient compute to run your fashions effectively with out overspending on pointless energy. On this put up, we evaluate two strong choices: NVIDIA’s A10 and the newer L40S GPUs. We’ll break down their specs, efficiency benchmarks in opposition to LLMs, and pricing that can assist you select primarily based in your workload.

There may be additionally a rising problem within the trade. Practically 40% of corporations battle to run AI initiatives resulting from restricted entry to GPUs. The demand is outpacing provide, making it tougher to scale reliably. That is the place flexibility turns into necessary. Counting on a single cloud or {hardware} supplier can decelerate your initiatives. We’ll discover how Clarifai’s Compute Orchestration helps you entry each A10 and L40S GPUs, supplying you with the liberty to modify primarily based on availability and workload wants whereas avoiding vendor lock-in.

Let’s dive in and check out these two totally different GPU architectures.

Ampere GPUs (NVIDIA A10)

NVIDIA’s Ampere structure, launched in 2020, launched third-generation Tensor Cores optimized for mixed-precision compute (FP16, TF32, INT8) and improved Multi-Occasion GPU (MIG) assist. The A10 GPU is designed for cost-effective AI inference, laptop imaginative and prescient, and graphics-heavy workloads. It handles mid-sized LLMs, imaginative and prescient fashions, and video duties effectively. With second-gen RT Cores and RTX Digital Workstation (vWS) assist, the A10 is a strong alternative for operating graphics and AI workloads on virtualized infrastructure.

Ada Lovelace GPUs (NVIDIA L40S)

The Ada Lovelace structure takes efficiency and effectivity additional, designed for contemporary AI and graphics workloads. The L40S GPU options fourth-gen Tensor Cores with FP8 precision assist, delivering vital acceleration for giant LLMs, generative AI, and fine-tuning. It additionally provides third-gen RT Cores and AV1 {hardware} encoding, making it a powerful match for advanced 3D graphics, rendering, and media pipelines. Lovelace structure permits the L40S to deal with multi-workload environments the place AI compute and high-end graphics run aspect by aspect.

A10 vs. L40S: Specs Comparability

Core Depend and Clock Speeds

The L40S includes a increased CUDA core depend than the A10, offering better parallel processing energy for AI and ML workloads. CUDA cores are specialised GPU cores designed to deal with advanced computations in parallel, which is crucial for accelerating AI duties.

The L40S additionally runs at a better increase clock of 2520 MHz, a 49% enhance over the A10’s 1695 MHz, leading to sooner compute efficiency.

VRAM Capability and Reminiscence Bandwidth

The L40S provides 48 GB of VRAM, double the A10’s 24 GB, permitting it to deal with bigger fashions and datasets extra effectively. Its reminiscence bandwidth can be increased at 864.0 GB/s in comparison with the A10’s 600.2 GB/s, enhancing knowledge throughput throughout memory-intensive duties.

A10 vs L40S: Efficiency

How do the A10 and L40S evaluate in real-world LLM inference? Our analysis workforce benchmarked the MiniCPM-4B, Phi4-mini-instruct, and Llama-3.2-3b-instruct fashions operating in FP16 (half-precision) on each GPUs. FP16 permits sooner efficiency and decrease reminiscence utilization—excellent for large-scale AI workloads.

We examined latency (the time taken to generate every token and full a full request, measured in seconds) and throughput (the variety of tokens processed per second) throughout numerous situations. Each metrics are essential for evaluating LLM efficiency in manufacturing.

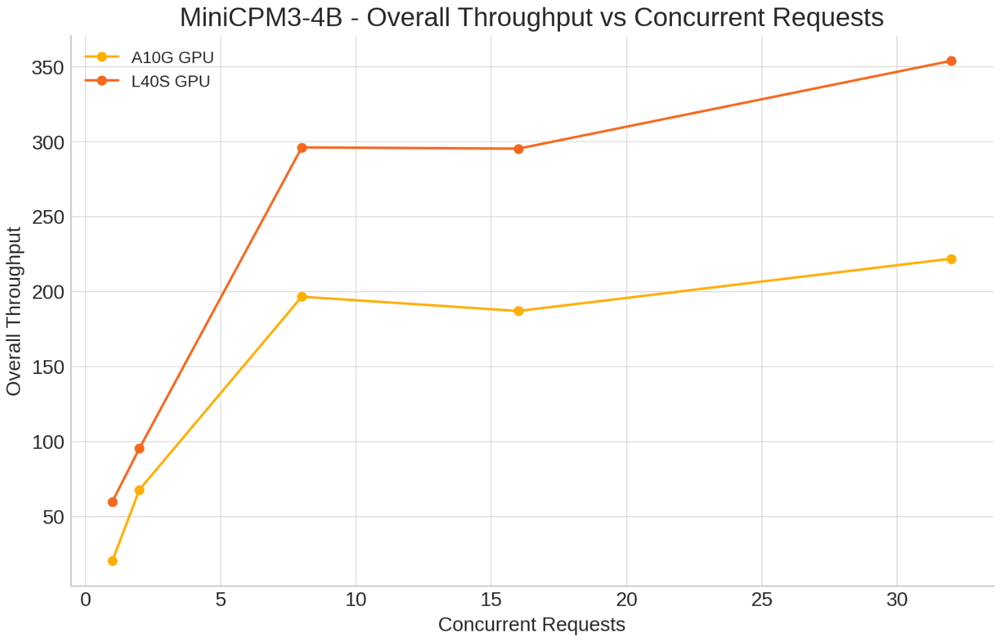

MiniCPM-4B

Eventualities examined:

- Concurrent Requests: 1, 2, 8, 16, 32

- Enter tokens: 500

- Output Tokens: 150

Key Insights:

-

Single Concurrent Request: L40S considerably improved latency per token (0.016s vs. 0.047s on A10G) and elevated end-to-end throughput from 97.21 to 296.46 tokens/sec.

-

Larger Concurrency (32 concurrent requests): L40S maintained higher latency (0.067s vs. 0.088s) and throughput of 331.96 tokens/sec, whereas A10G reached 258.22 tokens/sec.

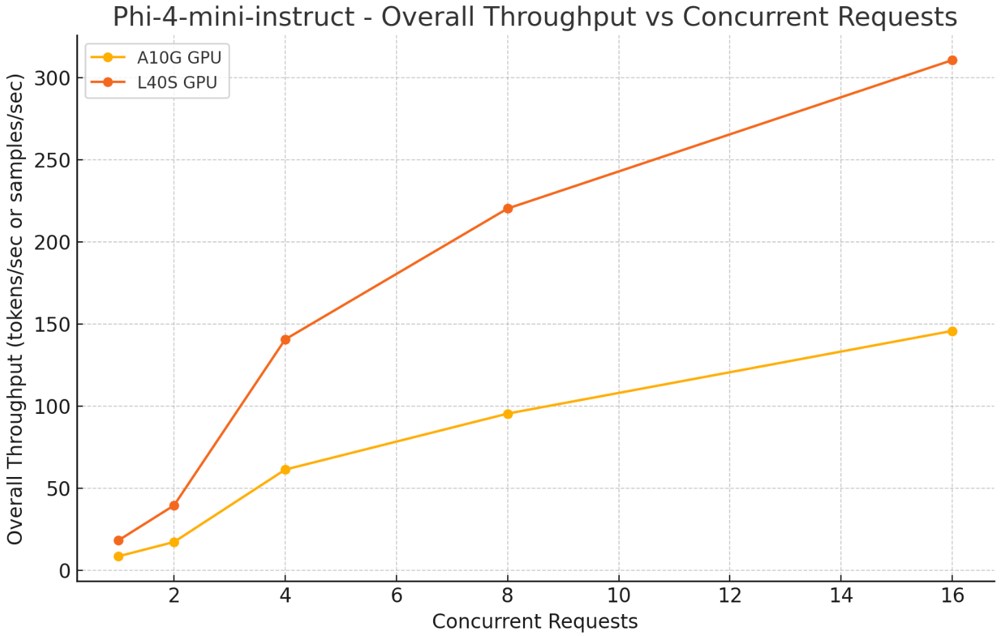

Phi4-mini-instruct

Eventualities examined:

- Concurrent Requests: 1, 2, 8, 16, 32

- Enter Tokens: 500

- Output Tokens: 150

Key Insights:

- Single Concurrent Request: L40S lower latency per token from 0.02s (A10) to 0.013s and improved general throughput from 56.16 to 85.18 tokens/sec.

- Larger Concurrency (32 concurrent requests): L40S achieved 590.83 tokens/sec throughput with 0.03s latency per token, surpassing A10’s 353.69 tokens/sec.

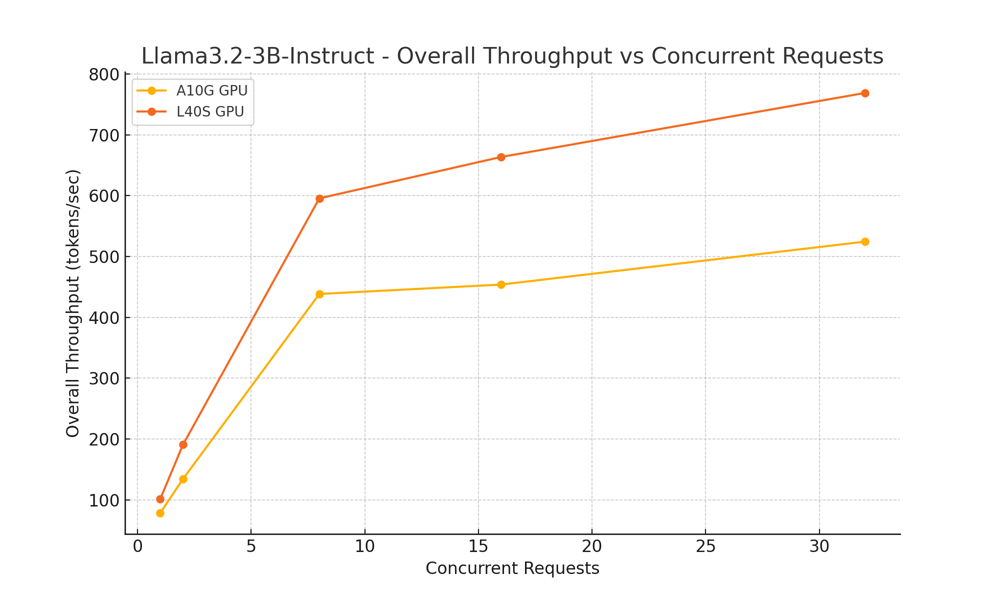

Llama-3.2-3b-instruct

Eventualities Examined:

- Concurrent Requests: 1, 2, 8, 16, 32

- Enter tokens: 500

- Output Tokens: 150

Key Insights:

- Single Concurrent Request: L40S improved latency per token from 0.015s (A10) to 0.012s, with throughput rising from 76.92 to 95.34 tokens/sec.

- Larger Concurrency (32 concurrent requests): L40S delivered 609.58 tokens/sec throughput, outperforming A10’s 476.63 tokens/sec, and lowered latency per token from 0.039s (A10) to 0.027s.

Throughout all examined fashions, the NVIDIA L40S GPU persistently outperformed the A10 in decreasing latency and enhancing throughput.

Whereas the L40S demonstrates robust efficiency enhancements, it’s equally necessary to think about elements corresponding to value and useful resource necessities. Upgrading to the L40S might require a better upfront funding, so groups ought to rigorously consider the trade-offs primarily based on their particular use instances, scale, and funds.

Now, let’s take a better have a look at how the A10 and L40S evaluate when it comes to pricing.

A10 vs L40S: Pricing

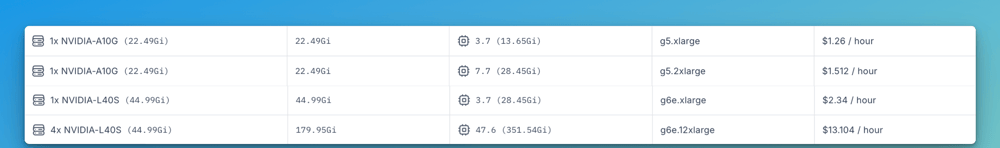

Whereas the L40S is extra highly effective than the A10, it’s additionally considerably costlier to run. Primarily based on Clarifai’s Compute Orchestration pricing, the L40S occasion (g6e.xlarge) prices $2.34 per hour, practically double the price of the A10-equipped occasion (g5.xlarge) at $1.26 per hour.

There are two variants accessible for each A10 and L40S:

- A10 is available in g5.xlarge ($1.26/hour) and g5.2xlarge ($1.512/hour) configurations.

- L40S is available in g6e.xlarge ($2.34/hour) and g6e.12xlarge ($13.104/hour) for bigger workloads.

Selecting the Proper GPU

Choosing between the NVIDIA A10 and L40S depends upon your workload calls for and funds issues:

- Nvidia A10 is well-suited for enterprises on the lookout for a cheap GPU able to dealing with blended workloads, together with AI inference, machine studying, {and professional} visualization. Its decrease energy consumption and strong efficiency make it a sensible alternative for mainstream purposes the place excessive compute energy is just not required.

- NVIDIA L40S is designed for organizations operating compute-intensive workloads corresponding to generative AI and LLM inference. With considerably increased efficiency and reminiscence bandwidth, the L40S delivers the scalability wanted for demanding AI and graphics duties, making it a powerful funding for manufacturing environments that require top-tier GPU energy.

Scaling AI Workloads with Flexibility and Reliability

We now have seen the distinction between the A10 and L40S and the way choosing the proper GPU depends upon your particular use case and efficiency wants. However the subsequent query is—how do you entry these GPUs on your AI workloads?

One of many rising challenges in AI and machine studying improvement is navigating the worldwide GPU scarcity whereas avoiding dependence on a single cloud supplier. Excessive-demand GPUs just like the L40S, with its superior efficiency, aren’t all the time available once you want them. However, whereas the A10 is extra accessible and cost-effective, availability can nonetheless fluctuate relying on the cloud area or supplier.

That is the place Clarifai’s Compute Orchestration is available in. It provides you versatile, on-demand entry to each A10 and L40S GPUs throughout a number of cloud suppliers and personal infrastructure with out locking you right into a single vendor. You’ll be able to select the cloud supplier and area the place you wish to deploy, corresponding to AWS, GCP, Azure, Vultr, or Oracle, and run your AI workloads on devoted GPU clusters inside these environments.

Whether or not your workload wants the effectivity of the A10 or the facility of the L40S, Clarifai routes your jobs to the sources you choose whereas optimizing for availability, efficiency, and value. This method helps you keep away from delays brought on by GPU shortages or pricing spikes and provides you the flexibleness to scale your AI initiatives with confidence with out being tied to 1 supplier.

Conclusion

Selecting the best GPU comes all the way down to understanding your workload necessities and efficiency targets. The NVIDIA A10 provides a cheap choice for blended AI and graphics workloads, whereas the L40S delivers the facility and scalability wanted for demanding duties like generative AI and huge language fashions. By matching your GPU option to your particular use case, you’ll be able to obtain the appropriate stability of efficiency, effectivity, and value.

Clarifai’s Compute Orchestration makes it simple to entry each A10 and L40S GPUs throughout a number of cloud suppliers, supplying you with the flexibleness to scale with out being restricted by availability or vendor lock-in.

For a breakdown of GPU prices and to check pricing throughout totally different deployment choices, go to the Clarifai Pricing web page. You may as well be part of our Discord channel anytime to attach with consultants, get your questions answered about choosing the proper GPU on your workloads, or get assist optimizing your AI infrastructure.